2024 has been super busy personally since I started a new position with UNDP in Laos in February, so this newsletter took a backseat for a while. Opinions here are still my own and do not reflect the views of my employer.

2024 was deemed the ‘elections superyear’, with half of the world’s population having the opportunity to go to the polls. It was also widely touted as the year of the AI election. New generative AI tools like chatbots and image generators were expected to pose new risks to the integrity of free and fair elections by generating convincing fake content at scale, making it easier to manipulate public opinion, mislead voters and ultimately erode trust in democratic processes. Evidence suggests the worst-case scenarios have not come to pass (albeit with the US election still to come), and the picture of AI’s impact is nuanced. However, the ‘second order’ effects of AI’s disruption should concern MPs.

What risks were raised?

Deepfake AI images, video and audio used to fabricate political content, create fake endorsements and denigrate political opponents.

A ‘liar’s dividend’ whereby real evidence is dismissed as faked by AI.

Micro-targeted political ads, personalised with generative AI to users' personalities.

Disinformation campaigns delivered at a new speed and scale by bad actors seeking to subvert democratic processes.

Synthetic content ‘flooding the zone’ of social media and other platforms, so that the public loses trust in what it sees and hears, undermining voters’ ability to make informed decisions.

Have these risks come to pass in 2024? A few illustrative examples:

Deepfakes: There have been plenty, all over the world.

The US election is looming on the horizon. During the primary season, voters in New Hampshire received robocalls impersonating President Biden and urging them not to vote in the primary election.

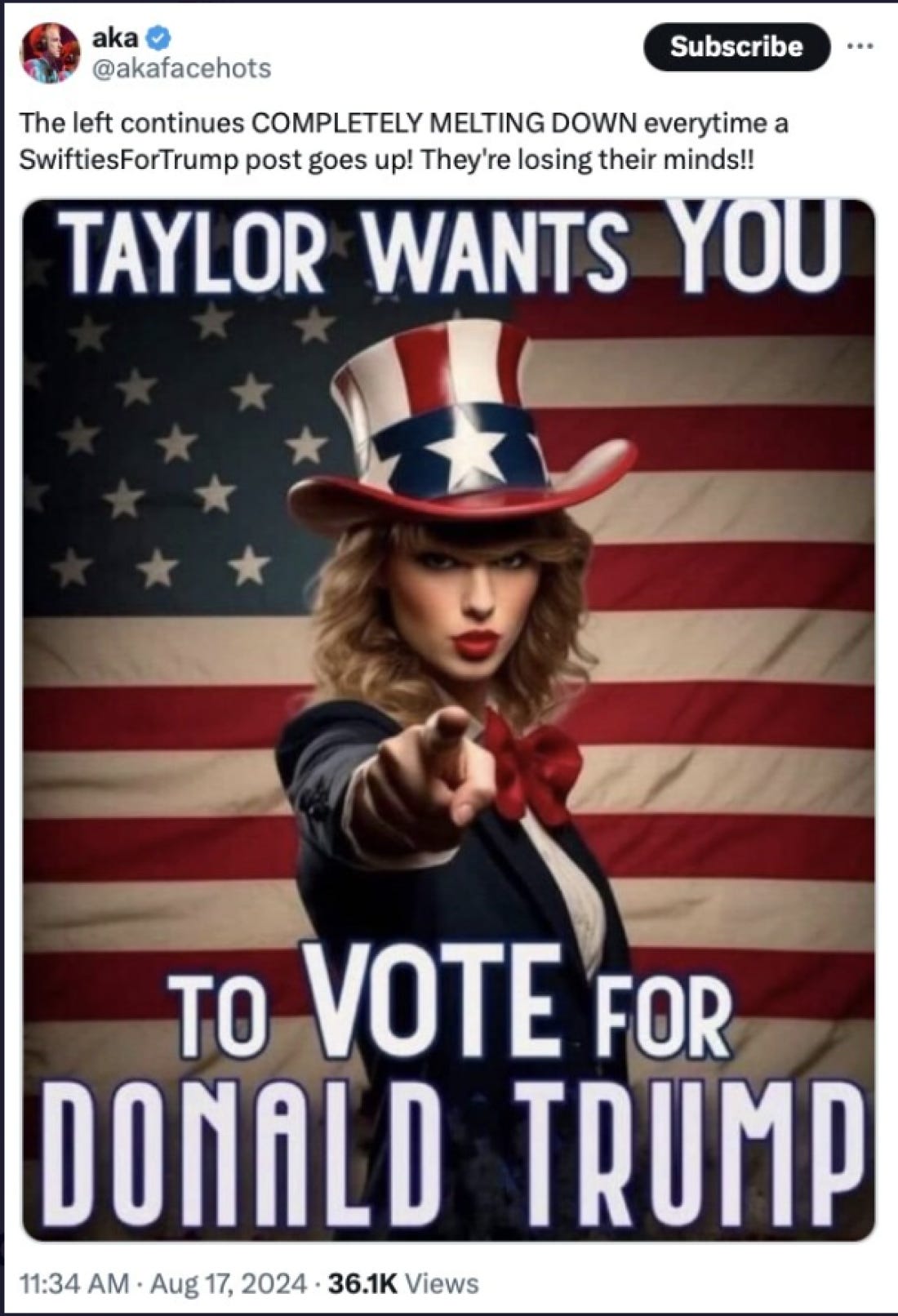

Former President Donald Trump shared AI-generated images suggesting that pop star Taylor Swift had endorsed him. He also claimed that images of the crowd at a Kamala Harris rally were faked, an early example of the liar’s dividend.

Matthew Diemer, a Democratic candidate in Ohio, tested AI-powered voice calls to reach voters. These were widely rejected, demonstrating healthy public scepticism towards candidates using faked content.

Last month, Elon Musk’s X platform released the LLM Grok 2, which has far fewer guardrails on generating political content than other AI models. Combined with the image generator Flux, it has led to some wild content.

We also saw novel uses of AI that point towards what campaigning might look like in future. A super PAC launched an AI chatbot of presidential candidate Dean Phillips built on ChatGPT. This violated OpenAI’s prohibition on political use, but the bot wasn’t banned until newspapers reported on it.

Elsewhere in the world, deepfake videos of candidates in Bangladesh showed them announcing their withdrawal from the race, in order to suppress voting.

AI has been used to produce content undermining candidates. In Mexico, a deepfake audio clip of a regional governor had her threatening to withdraw social programs if citizens did not vote for her. In Indonesia, a fake clip circulated online of Vice-Presidential candidate Gibran Rakabuming Raka insulting recipients of government benefits. In Slovakia, a deepfake audio clip of the opposition leader saying he would raise the price of beer and buy votes from the Roma minority surfaced on Facebook days before the election. Faked audio is harder to debunk, especially just before polling.

There were various fake endorsements. In South Africa, a digitally altered video showed the rapper Eminem endorsing an opposition party ahead of the country’s election in May.

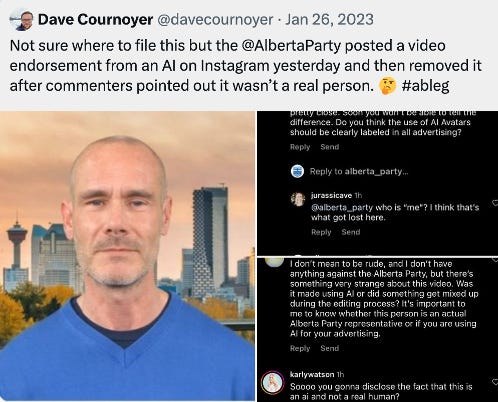

In Alberta, Canada, a party faked human endorsements.

Deepfake content also has a strongly gendered impact that harms the position of women in political life. An AI-faked picture of Rumeen Farhana, an opposition politician in Bangladesh, in a bikini caused an uproar on social media.

We’re seeing attempts to trial generative AI for disinformation campaigns. OpenAI identified five online campaigns using ChatGPT to try to manipulate public opinion, for instance by boosting anti-Ukraine material in different European languages. The good news is that these campaigns did not gain much traction, but the tools are being tested.

India, the world's largest democracy, saw widespread use of AI in its recent election. There were deepfakes of Bollywood stars, such as Ranveer Singh criticising Narendra Modi. In one video, former Chief Minister of Tamil Nadu M Karunanidhi endorses his son in the 2024 election. He died in 2018, which raises pretty clear ethical and legal questions.

Evidently this constitutes a manipulated information space, but how far the content was produced to deceive is open to question. Some uses of AI were meant to entertain or engage voters and were obviously not real, for instance Modi dancing, superimposed over a video of the American rapper Lil Yachty.

In India, we also saw AI used to translate campaign messages and speeches into minority languages, improving reach to ethnic groups and rural areas. If applied responsibly and ethically, AI could strengthen representative governance by enabling the voices and experiences of harder-to-reach groups to be better heard in parliament and political life.

This suggests AI's impact on the 2024 elections has been nuanced and probably less severe than some feared. The Alan Turing Institute found that of the 112 national elections held since January 2023, just 19 showed AI interference, and there was no evidence that it changed results compared with candidates' expected performance based on polling data. Yet while there have been instances of AI misuse, the ethical boundaries of using AI in election campaigns also remain unclear. If the impact has been smaller than expected, what is behind this?

Firstly, a more informed public. As AI has entered the mainstream, voters have become more adept at identifying fake news and manipulated content. People are more cautious about the sources of their information, and many can now distinguish between genuine and AI-created content. Where AI has been used, it has prompted ethical debates and public backlash. However, does this hold in countries with a less robust media environment? We still don’t know. And capabilities are still increasing rapidly, as the Grok 2 example above shows.

Secondly, current AI tools have had little serious impact. Meta’s latest Adversarial Threat Report acknowledged that AI was being used to meddle in elections, but that generative AI offered only “incremental productivity and content-generation gains to the threat actors”.

Thirdly, mass persuasion is very difficult. People rarely change their beliefs based on new information, and AI-generated content faces stiff competition in a crowded information landscape, which makes targeting content difficult.

Overall, challenges to election integrity are neither new nor caused by AI alone. Disinformation, voter suppression and manipulation predate AI and continue to be significant. AI has added a new layer to these problems, but it is not the sole or primary cause of threats to democratic processes.

However, whether intentionally or otherwise, the impact of generative AI on the information space is likely to create second-order risks to elections and democratic institutions. Confusion over whether AI-generated content is real, a resulting lack of trust in online sources, deepfakes inciting online hate and politicians exploiting AI to create disinformation for electoral gain all damage the democratic system, and the long-term consequences remain uncertain. In countries with lower media literacy and a less open media landscape, the impact is likely to be greater.

Moreover, when democratic institutions are repeatedly undermined by disinformation and manipulation, it erodes the norms and principles that sustain democratic governance. It drives cynicism towards people holding public office and weakens trust in institutions, including parliaments.

So what can MPs do?

Prioritize understanding AI technologies and their potential impact. Hold inquiries and expert hearings in parliament. The effects are international, and there is a growing number of events and platforms for sharing information across borders. In September, I presented on deepfakes at a conference in Singapore organised by the Commonwealth Parliamentary Association and UNDP. The IPU has held webinars for MPs on AI and will seek to pass a resolution at its forthcoming Assembly in October. The Westminster Foundation for Democracy has published guidelines for AI in parliaments.

The regulatory framework around AI, and deepfakes in particular, will need to be addressed. Study emerging regulatory frameworks on AI; there are some valuable resources out there that collate this information. Measures may include banning ‘fake humans’ online, enforcing mandatory disclosures when AI-generated content is used, labeling requirements for AI-generated media and content provenance techniques such as digital watermarks or signatures. MPs can update defamation and election laws to address AI-generated content and introduce strict penalties for malicious use of AI. All of these measures are stronger when regulation is coordinated across democracies.

MPs can work with each other and across parties to push for guidance for political parties on fair use of AI during election periods, and for media outlets on how to report on incidents. They can raise awareness and empower citizens to be more discerning about the content they consume.

2024 has shown us that while AI poses significant challenges to electoral integrity, they are not insurmountable. Lawmakers everywhere are still learning to navigate this new landscape. Ultimately, it is by strengthening and improving democratic institutions that we will meet the challenges AI poses to elections.