Risks to Democracy from Generative AI: The Public Information Space

How might generative AI impact the information space that underpins democratic societies? And what can MPs do about it?

The rapid pace of AI deployment has raised questions fundamental to the nature and freedom of democratic societies. The public information space is essential to free and open debate, civic engagement and trust in democratic institutions. However, generative AI's ability to produce synthetic text and other media means that in future what we see and hear will be increasingly shaped by AI. What impact might this have? And where is the emerging evidence?

This is the first post looking at AI’s impact on democracy; future posts will cover other areas, including elections and public services.

We’ll start by looking at emerging capabilities from generative AI.

The public information space in democratic societies has changed significantly due to social media. AI’s capability to mass-produce synthetic text now presents unique challenges and new vulnerabilities. Firstly, it enables the creation of false but plausible information at greater scale and speed. Generative AI can be used to automate production of text content including emails, press releases, social media posts and online comments. This could ‘flood the zone’ of our information environment with synthetic text, reducing public trust in what people read online, including from reliable sources. If the public loses trust in what it encounters online, this can compromise how democratic governments inform the public and respond to crises.

Secondly, generative AI gives the ability to craft and automate audience-specific messages. Leveraging demographic information, it has the potential to micro-target content to certain biases, ideologies and expectations through, for example, original articles produced for different user demographics or personalised chatbots targeting individuals. This gives capabilities to generate custom-made disinformation, discussed more below.

Emerging evidence

Research shows that generative AI can produce persuasive news articles that people view as real.

AI-generated news websites, ‘content farms’, fake reviewers and spam sites are springing up, using generative AI to create fringe and inauthentic content relating to politics, health, the environment, finance and technology, including fabricated events and medical advice.

The UK Electoral Commission has warned that hacked voter data could be used to micro-target AI-generated disinformation.

AI increasingly allows development of realistic, high-resolution ‘deep fake’ images, video and audio. This is often cited as the biggest risk from generative AI, including by industry leaders. A wave of deep fake content can undermine the shared facts and empirical evidence on which democracy is based. It can sow informational uncertainty, fuel conspiracy theories and exacerbate social division. Accurate content can also be labelled as synthetic or faked by those who consider it unfavourable, a so-called ‘liar’s dividend’ which undermines credible information. Combating this will require a combined effort by our democratic institutions, regulators, online platforms and AI companies.

Emerging evidence

New tools are available to clone voices using only three seconds of audio. Studies have shown that humans find it difficult to identify deep fake speech, and automated detectors are still unreliable.

As examples of deep fakes aimed at manipulating public opinion, a deep fake of Bill Gates was produced “revealing” that the coronavirus vaccine causes AIDS, and a deep fake video showed Joe Biden making transphobic remarks.

The war in Ukraine has seen various AI deep fakes. A deep fake of the mayor of Kyiv briefly tricked the mayors of European capitals during a video call. A rudimentary deep fake video call impersonating former Ukrainian president Petro Poroshenko also targeted Bill Browder, a prominent critic of the Putin regime. A supposed soldier posting videos from the Ukraine war was in reality a deep fake posted from an account in China.

Deep fakes are increasingly being used for political purposes:

In Jamaica, an MP cited deep fake images aimed at denigrating a party leader.

In the United States, presidential candidate Ron DeSantis’ campaign used a deep fake image of Donald Trump hugging Dr. Fauci to try to discredit Trump.

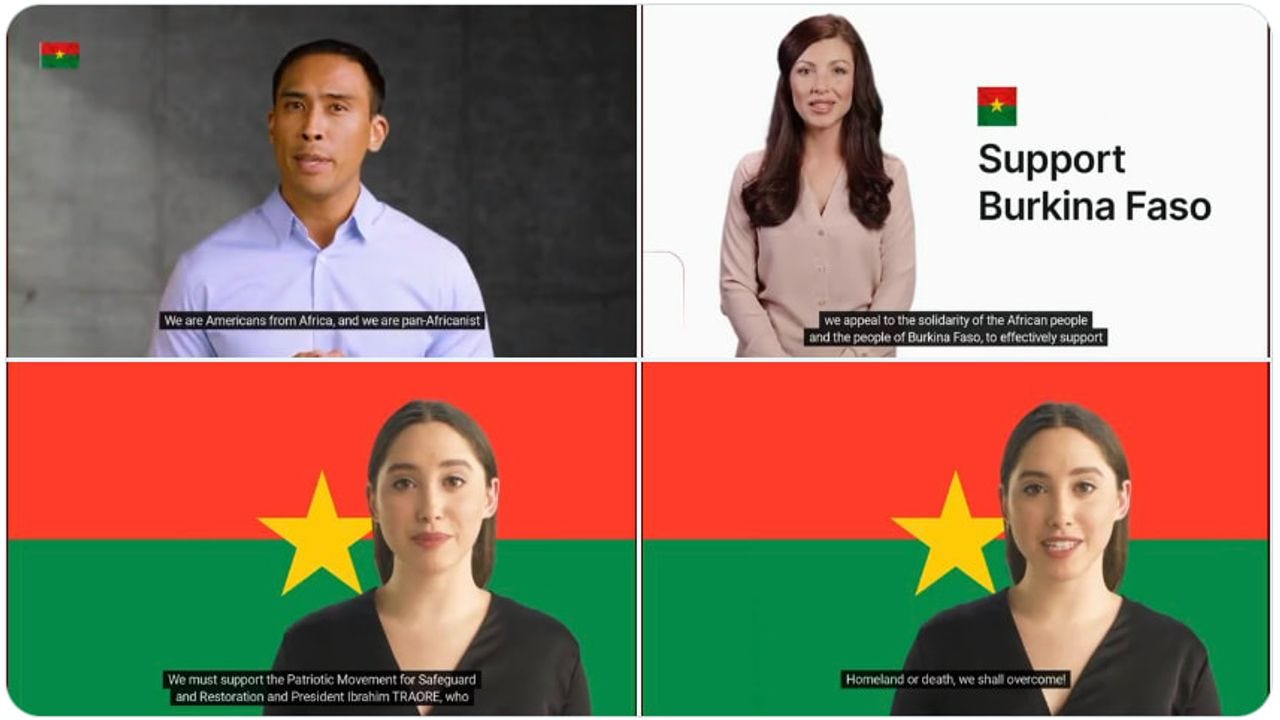

In Burkina Faso, deep fake videos circulating on WhatsApp make it appear as if American ‘Pan-Africanists’ supported a recent military coup.

In India, a deep fake photograph was used to discredit protestors and reduce pressure on political leaders.

As an example of the ‘liar’s dividend’, also in India, a state MP was alleged to have been recorded accusing fellow party members of illegally amassing US$3.6 billion. The MP called the recording ‘fabricated’ and ‘machine generated’, but deep fake experts found that one recording was almost certainly real.

There is a risk that politically motivated generated content can target the policymaking system. It could be used for ‘astroturfing’: creating a false sense of public support or opposition by generating fake messages from the public, for example by mass-producing text inputs, or by cloning voice and speech patterns to impersonate callers to lawmakers. AI can produce content that is varied, personal and elaborate, bypassing previous methods of detecting bots. An LLM trained on relevant data could then identify individuals or points in the system to exploit with content, such as direct communication, public relations campaigns or other points of leverage. For MPs, these abilities could compromise the public engagement process essential for the effective scrutiny of policy and legislation, and affect how they receive constituent feedback.

Emerging evidence

In the United States in 2019, a Harvard undergraduate used a text-generation program to submit comments in response to a government request for public input on a Medicaid issue. A subsequent study demonstrated that volunteers were unable to distinguish between AI-written and human-written comments.

In a recent field experiment, 30,000 emails were sent by researchers to 7,132 state legislators in the United States. Half were written by GPT-3 and half by students. The study found that many legislators and their staff did not dismiss the machine-generated content as inauthentic, and that generated content was seen as almost indistinguishable from human content.

The capabilities above open new avenues for disinformation and influence campaigns targeted at democratic societies. Objectives may include:

Attempting to destabilise foreign relations or domestic affairs in rival countries

Promoting allies and denigrating rivals

Pushing certain positions or policies, including highlighting conspiracy theories

But how does generative AI offer bad actors new capabilities?

It can automate the production of propaganda and result in more scalable campaigns.

The cultural fluency of generative AI can make campaigns more persuasive, removing grammatical or stylistic problems.

The ability of chatbots to engage with their audience and tailor messages allows for more personalised campaigns.

And access to publicly available and open source models opens the door to a wider variety of actors engaging in influence campaigns, and reduces costs.

The content of campaigns may not need to be highly convincing, just enough to disorient and lead people to distrust the information environment, or to drive anger and societal division.

While these capabilities are new, the bottleneck for influence campaigns remains distributing information rather than generating it, for example through access to verified social media accounts. Fact-checking is therefore likely still the main form of defence.

Emerging evidence

Studies have shown that LLMs can be used to develop misinformation campaigns to persuade parents not to vaccinate their children. In this instance, GPT-4 and ChatGPT outlined a plan, developed messages, and advised on how to personalise and target messages.

One research project demonstrated how GPT-3’s writing could sway readers’ opinions on issues of international diplomacy. The researchers showed volunteers sample tweets written by GPT-3 about the withdrawal of US troops from Afghanistan and US sanctions on China. In both cases, they found that participants were swayed by the messages.

ChatGPT has been shown to produce foreign propaganda and disinformation in the style and tone of the Chinese Communist Party and Russian state-controlled news agencies such as RT and Sputnik. ChatGPT has also been shown to generate more disinformation in Chinese than in English.

Generative AI will give increased capabilities to bad actors. However, the issue of weakened confidence in the institutions that might push back (media, judiciary, parliament and regulatory agencies) existed before generative AI hit the mainstream. Combating this necessitates a public awareness drive and informed and active parliamentarians.

Taking action: Based on the risks above, what are some actions that MPs can consider?

Lawmaking role

In new legislation regulating AI, consider:

Mandating labels for AI-produced content

Requiring companies to report on misuses of systems generating synthetic content

Ensuring transparency from AI companies on algorithms and content moderation

Establishing dedicated bodies and audit mechanisms to oversee AI research and application

Mandating verification of social media accounts

Examine and update existing laws as needed. For example:

Laws that regulate online content, hate speech and disinformation

Laws related to impersonation, identity theft and fraud

Broadcasting and press laws

Privacy laws

Laws on political advertising and elections to protect against AI-generated content and targeting

In the budget law, assess funding for:

Public information campaigns to identify synthetic media

Research on tools to detect AI-generated content, e.g. through public-private partnerships

Oversight role

Submit written questions or ask oral questions to government, for example about:

Public awareness initiatives on information manipulation

The robustness of public and stakeholder consultation on AI policy and regulation

Measures to verify the authenticity of communications to government and in public consultation processes

Use inquiries to go into more detail on the impact of generative AI on the public information space. Hold public hearings and call expert witnesses, including AI and social media industry representatives, fact-checking organisations, academics and scientists, and civil society groups. Use the media to publicise reports to help inform the public of risks.

Representation role

Help boost societal resilience against information manipulation through media literacy, public awareness and education

Hold public meetings, school and university visits on AI literacy, making a special effort to target young people

Partner with local media, universities and civil society groups to help monitor prevalence of synthetic media locally

Monitor potential manipulation of constituent input. Work with staff to help verify sources of incoming communications, especially those seeking to influence policy positions

As a democratic leader

Call for transparency from social media platforms on the prevalence of synthetic content and how their systems address it

Call out disinformation and misinformation when identified, including partnering with fact-checking organisations

Collaborate with colleagues in international parliaments to share best practices and catalogue and publicise instances of AI-generated disinformation

Further reading and resources

New York Times: Disinformation Researchers Raise Alarms About A.I. Chatbots

Paper: The Technologies Behind Precision Propaganda on the Internet

Deep fake detection organisations:

NewsGuard offers tools to identify unreliable AI news sites

Truepic offers techniques to cryptographically sign and timestamp AI videos

Intel has developed a deep fake detector

Other initiatives identifying deep fakes:

Facebook and Reuters published a course that focuses on manipulated media

The Washington Post released a guide to manipulated videos.

In the US, the government’s Defense Advanced Research Projects Agency (DARPA) is working on technology to identify whether audio or video has been manipulated.